Integrations

What are Integrations?

Integrations are how Firetiger connects to your existing infrastructure, tools, and data sources. They serve two purposes: they extend the capabilities of your Agents by granting them access to external tools, and they provide data sources that feed telemetry into Firetiger for analysis.

You can manage your integrations at /integrations.

Connections

Connections are integrations that give Firetiger ongoing access to an external service. When you set up a connection, you provide credentials and configuration, and Firetiger maintains the link on your behalf.

Connections are used in two ways:

- As agent tools — Agents can use connections to interact with external systems. For example, a GitHub connection lets agents read repositories and create issues, while a Slack connection lets them post messages to channels.

- As data sources — Some connections pull data into Firetiger on an ongoing basis. A PostgreSQL connection, for instance, lets agents query your database directly.

For a full list of available connections, see the Integrations section of the docs.

Ingest Sources

Ingest sources are integrations that push telemetry data — logs, traces, and metrics — into Firetiger. These are typically configured on the sending side, pointed at your Firetiger ingest endpoint.

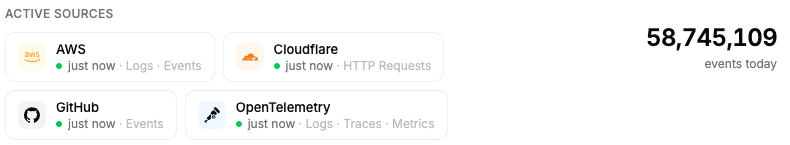

You can see the health and latency of your active ingest sources at /integrations/ingest:

This page shows which sources are actively sending data, their recent latency, and any delivery issues. It’s a good first place to check if you suspect telemetry isn’t arriving.

For setup guides on specific ingest sources, see the Infrastructure and Observability integration docs.
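As a rough illustration of what "configured on the sending side" can look like, the sketch below builds exporter settings for a sender that happens to use an OpenTelemetry SDK or collector. That choice of sender is an assumption, not something this page specifies. `OTEL_EXPORTER_OTLP_ENDPOINT` and `OTEL_EXPORTER_OTLP_HEADERS` are the standard OpenTelemetry environment variables. The endpoint URL, the header name, and the helper `otlp_env` are hypothetical placeholders; take the real values from the setup guide for your source.

```python
from urllib.parse import quote


def otlp_env(endpoint: str, headers: dict[str, str]) -> dict[str, str]:
    """Build standard OpenTelemetry exporter environment variables.

    Header values are percent-encoded because OTEL_EXPORTER_OTLP_HEADERS
    is a comma-separated list of key=value pairs.
    """
    header_value = ",".join(
        f"{name}={quote(value, safe='')}" for name, value in headers.items()
    )
    return {
        "OTEL_EXPORTER_OTLP_ENDPOINT": endpoint,
        "OTEL_EXPORTER_OTLP_HEADERS": header_value,
    }


# Hypothetical values: replace with your actual ingest endpoint and credentials.
env = otlp_env(
    "https://ingest.example.invalid",
    {"authorization": "Bearer YOUR_INGEST_TOKEN"},
)

for key, value in env.items():
    print(f"{key}={value}")
```

The resulting variables would be set in the environment of the sending process, so the telemetry source, not Firetiger, initiates each delivery.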

Credential Security

When you configure an integration, any secret values, such as API keys, database passwords, and OAuth tokens, are never visible to LLMs and are stored at rest only in encrypted cloud secrets managers.

Here’s how it works: when an agent runs, its sandboxed environment receives dummy placeholder credentials. An isolated proxy sits between the sandbox and the external service. The proxy intercepts outgoing requests and replaces the dummy credentials with the real values, which only the proxy can access. The agent’s LLM never sees or handles actual secrets; it only ever works with the placeholders.

This means that even if an agent’s behavior were compromised, the real credentials remain inaccessible to the model.
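The placeholder-substitution flow described above can be sketched in a few lines. This is a simplified, in-memory model and not Firetiger's implementation: the `Request` and `CredentialProxy` classes, the placeholder string, and the example URL are all hypothetical, and a real proxy would fetch secrets from a secrets manager and operate on live network traffic.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    """A minimal stand-in for an outgoing HTTP request (hypothetical type)."""
    url: str
    headers: dict[str, str] = field(default_factory=dict)


class CredentialProxy:
    """Swaps placeholder credentials for real ones outside the sandbox."""

    def __init__(self, secrets: dict[str, str]):
        # Maps placeholder -> real secret. Only the proxy holds this mapping.
        self._secrets = dict(secrets)

    def forward(self, request: Request) -> Request:
        # Build a new request so the sandbox's copy is never modified
        # and never contains the real value.
        rewritten = {}
        for name, value in request.headers.items():
            for placeholder, real in self._secrets.items():
                value = value.replace(placeholder, real)
            rewritten[name] = value
        return Request(url=request.url, headers=rewritten)


# Inside the sandbox: the agent only ever sees the placeholder.
sandbox_request = Request(
    url="https://api.example.invalid/issues",
    headers={"Authorization": "Bearer PLACEHOLDER_GITHUB_TOKEN"},
)

# Outside the sandbox: the proxy substitutes the real value before sending.
proxy = CredentialProxy({"PLACEHOLDER_GITHUB_TOKEN": "real-secret-value"})
outgoing = proxy.forward(sandbox_request)
```

In this model, anything the agent can read or log (`sandbox_request`) contains only the placeholder, while the request that leaves the proxy (`outgoing`) carries the real credential.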